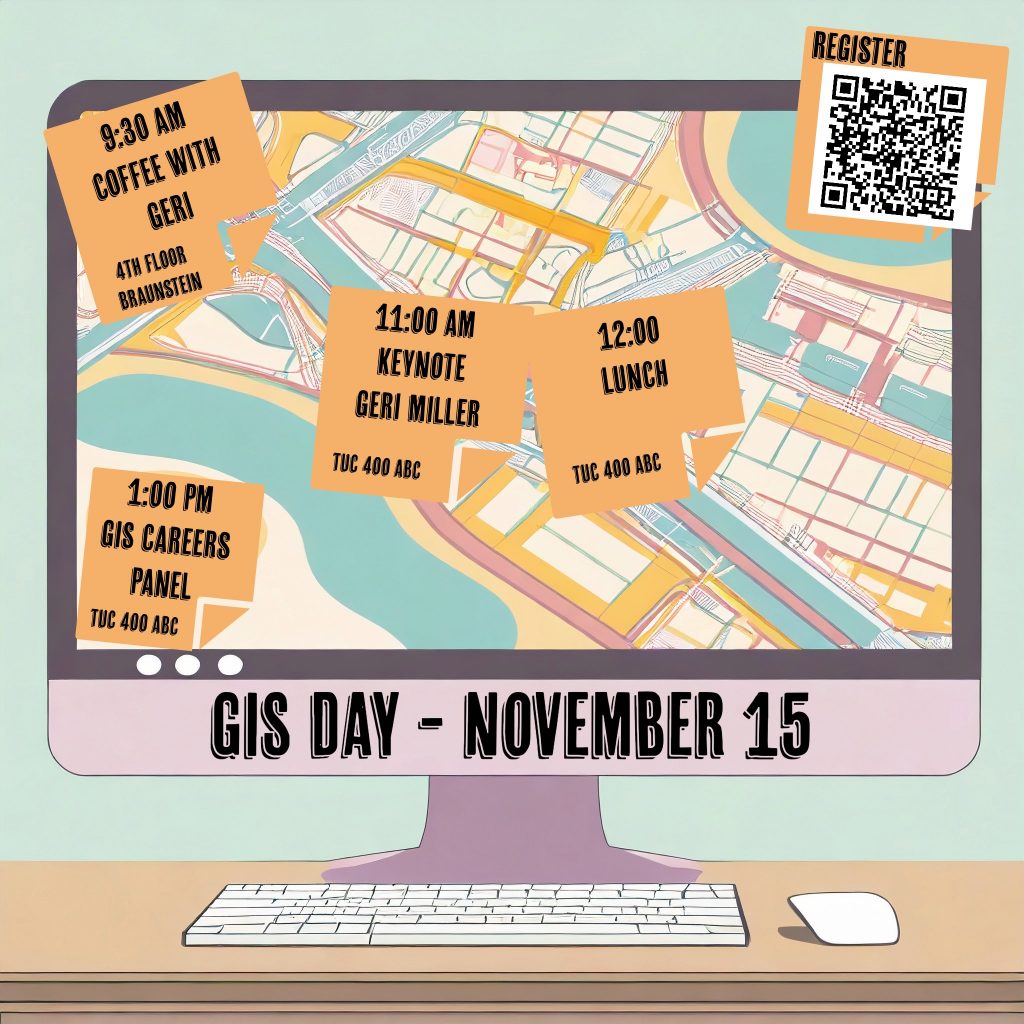

Join other UC GIS users for the celebration of National GIS Day.

GIS, or Geographic Information Systems, is a way of analyzing spatial data to identify spatial patterns, solve problems and better understand the world we live in. With GIS we can understand climate change, disease progression, population dynamics and other phenomena of our modern world.

Sponsored by the Provost’s Office, UC Libraries, Department of Geography & GIS, Geography Graduate Student Association, and the Joint Center for GIS and Spatial Analysis, the day features a keynote by Geri Miller, Director of Education at Esri (an industry leader in GIS software), and a GIS Jobs Panel. The event is free and open to all. Lunch will be provided for all attendees.

GIS Day

Date: November 15, 2023

Venue: Rm 400 ABC, Tangeman University Center

11:00 am Keynote Speaker: Geri Miller, Director of Education, Esri. Talk title: “Geospatial Education in the Cloud: Today’s Workforce Skills”

- Geri Miller is Director of Education at Esri. Her main role is to help academic institutions stay on the cutting edge of geospatial technology. Prior to that, she was an Instructor and Technical Lead at Esri, specializing in online and onsite delivery of various geospatial technology courses. Ms. Miller is also an Associate Program Director for the Johns Hopkins University Master of Science in Geographic Information Systems program and has been a lecturer in the program since its inception. She has developed and taught a range of courses in the GIS curriculum, including Web GIS, Spatial Analytics, and Programming in GIS. https://advanced.jhu.edu/directory/geri-miller/

12:00 pm Lunch

1:00 pm Jobs Panel featuring

- Trisha Brush, MBA PMP GISP DTM (Director Information Systems and Analytics at Planning and Development Services of Kenton County)

- Kelly Wright, M.S., GISP (GIS Analyst at City of Monroe)

- Gabriela Waesch (GIS Analyst at OKI Regional Council of Governments)

- Madison Cox (Geospatial Data Scientist at Sanitation District No. 1 of Campbell and Kenton Counties)

- Madison Landon (Urban Planner at Woolpert)

Register for GIS Day in Faculty One Stop

Please also join members of the Department of Geography & GIS for coffee, pastries and conversation with the keynote speaker before the official celebration.

Venue: 4th Floor lounge, Braunstein Hall

9:30 – 10:30 am Pre-event Coffee and Donuts with Keynote

On Thursday, June 8, the University of Cincinnati Libraries Research & Data Services (R&DS) team will host a UC ORCID AWARENESS Day as part of the Data and Computational Science Series. We invite you to come to Rm 540B in the Faculty Enrichment Center, 5th floor of the Walter C. Langsam Library, to activate or enrich your ORCID profile.
